2026 — ITDT LLC uses agentic AI assistance.

By Danny Thornton — ITDT LLC founder and author of the 3D Hidden Line algorithm (1989), in collaboration with Claude Code (2026).

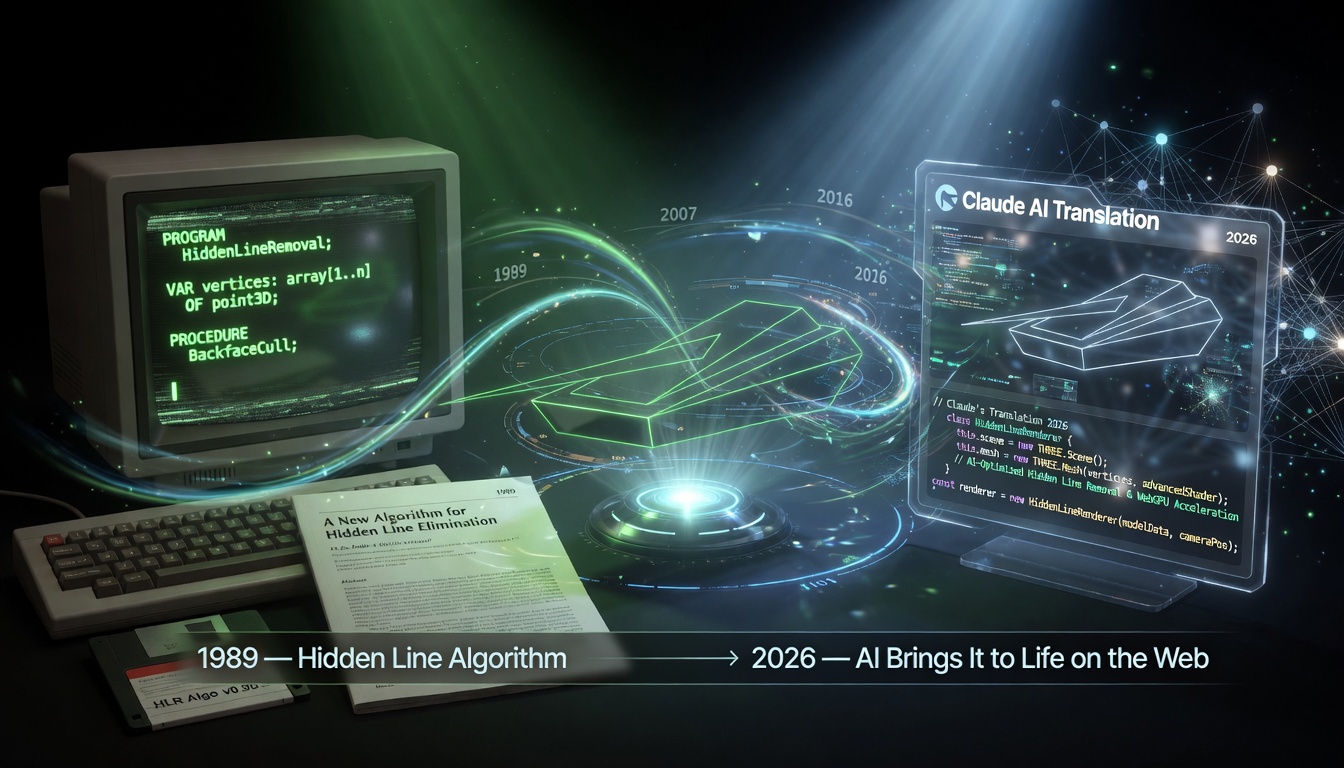

In the spring of 2026, Danny Thornton, who as a college senior in 1989 had written a 3,800-line 3D hidden-line renderer in Turbo Pascal, sat down with an artificial intelligence to bring that code, by then more than thirty-five years old, back to life. Claude Code, an agentic AI, faithfully translated the original Pascal line for line into JavaScript, with the explicit goal of preserving the original functionality, look, and feel. The result runs live on this site, in any modern browser, doing what the original Pascal did when it ran on EGA monitors and VT240 terminals three and a half decades earlier.

The translation itself is a small thing. People have translated code between languages for as long as there have been languages. What is not a small thing is how the work was done: the AI read the source, reasoned about its structures, wrote the new version, checked for consistency, and iterated — across seventeen interdependent files of dense pointer arithmetic and recursive boundary traversal — guided at the project level by the original author, but executing each individual step on its own. Thornton then worked with Claude Code to wire the translated code to a browser canvas, rebuild the keyboard-driven user interface, and write the page that you are reading now. Software that can do all of that, in collaboration with a human, is new. This page is one small artifact of an early moment in its existence.

— 2026 —

For most of computing history, translating code between languages was a human job — tedious, error-prone, and demanding deep understanding of both the source language and the intent buried in every structure. Automated tools could handle simple syntactic conversions. Anything involving complex logic, custom data structures, or subtle numerical behavior required a programmer who could read the code, not merely parse it.

The arc of AI progress toward that kind of comprehension was long and uneven. Early AI systems in the 1980s and 1990s ran on explicit rules — they could match patterns, but they had no model of meaning. Machine learning in the 2000s introduced systems that could learn from data, but they were narrow: good at classification, poor at reasoning. Deep learning in the 2010s brought dramatic gains in perception — image recognition, speech, translation between human languages — but general code understanding remained out of reach.

The shift came with large language models. Trained on vast quantities of code alongside human text, these models developed something closer to genuine comprehension: the ability to follow logic, infer intent, recognize idioms, and reason about behavior rather than just surface syntax. By the early 2020s, AI assistants could answer questions about code, suggest completions, and explain what a function did.

The leap that made a project like this translation possible was something different again: agentic AI, meaning AI that does not merely answer questions but takes sustained, multi-step action toward a goal. Claude Code is an agentic system. It can read a file, reason about its structure, decide how to map constructs across languages, write the output, check the result, notice its own mistakes, and iterate. It does not wait to be told what to do at every step. It holds a goal, builds a plan, and executes across dozens of interdependent files.

The translation of this Pascal codebase was not a single prompt. It required understanding the layered unit dependencies, preserving the pointer-based data structures as JavaScript objects, carrying over numerical workarounds whose reasons were buried inside the original logic, and maintaining the behavioral contract of seventeen separate modules. That kind of sustained, context-aware engineering was not something AI could do even a few years before this page was written. The pace of change is not gradual. It is compounding. What seems remarkable today will, very likely, seem ordinary soon.
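Preserving pointer-based structures is largely a matter of mapping Pascal's pointer idioms onto JavaScript object references. The sketch below illustrates that mapping on a hypothetical singly linked list of line segments. The `Segment` record and the helpers `pushSegment`, `countSegments`, and `removeFirst` are illustrative assumptions, not code taken from the 1989 source or from its translation.

```javascript
// Hypothetical Pascal record (illustrative only, not from the 1989 source):
//   type
//     SegPtr  = ^Segment;
//     Segment = record
//       x1, y1, x2, y2: Real;
//       next: SegPtr
//     end;
// In JavaScript the record becomes a plain object, the pointer becomes an
// object reference, and nil becomes null.

// Pascal: new(p); p^.x1 := x1; ...; p^.next := head; head := p;
function pushSegment(head, x1, y1, x2, y2) {
  return { x1, y1, x2, y2, next: head };
}

// Pascal: p := head; while p <> nil do begin ...; p := p^.next end;
function countSegments(head) {
  let n = 0;
  for (let p = head; p !== null; p = p.next) n++;
  return n;
}

// Pascal would call dispose() on the unlinked node; JavaScript simply drops
// the last reference and leaves reclamation to the garbage collector.
function removeFirst(head) {
  return head === null ? null : head.next;
}

let head = null;
head = pushSegment(head, 0, 0, 1, 0);
head = pushSegment(head, 1, 0, 1, 1);
console.log(countSegments(head)); // 2
```

One difference such a mapping has to respect: a Pascal record assignment such as `r := p^` copies the whole record, while assigning a JavaScript object copies only the reference, so a by-value copy needs an explicit clone (for example `{ ...p }`).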

For ITDT LLC, the translation also served a practical purpose: a controlled test of whether agentic AI was ready to do real engineering work on the company's behalf. The verdict: agentic AI is here and capable, and ITDT LLC is using it.

— 1989 —

The original code was written by a college senior, Danny Thornton, on hardware that today's readers may have to look up. There was no internet to search. No Stack Overflow. No PDF of a graphics textbook a click away. There was a campus library, an EGA monitor on a PC, a VT240 terminal connected to a VAX, a printer for the listing, a copy of the Turbo Pascal manual, and the inside of one student's head.

By 1989, the published computer-graphics literature already contained landmark hidden-surface and clipping algorithms: Roberts (1963), Appel's quantitative-invisibility algorithm (1967), the Newell–Newell–Sancha painter's algorithm (1972), Catmull's z-buffer (1974), the Weiler–Atherton polygon clipper (1977), and BSP trees (Fuchs / Kedem / Naylor, 1980). The author had consulted none of them. Without knowing those works existed, he independently arrived at solutions structurally similar to several of them — and composed them in ways that are genuinely distinctive.

This is what serious software engineering looked like before the network became a substitute for memory: an undergraduate, sitting alone, deriving by hand what graduate students elsewhere were publishing in journals.

The algorithm is object-space, front-to-back, polygon-clipping hidden-line removal:

DISTANCE_TO_PLANE), so the closest polygon to the viewer comes out first.

This was not a toy. By the standards of an undergraduate project in 1989, the codebase represented a remarkable amount of careful engineering:

The typical CS senior project of that era was a game, a parser, a small database, or a wireframe rotator. This was a full hidden-line system with sorting, clipping, segment classification, and recursive boundary traversal. It was graduate-level work, completed before graduation.
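The ingredients named above (object-space processing, front-to-back ordering, and clipping each edge against polygons already known to be in front of it) can be sketched in JavaScript, the language of the translation. This is a minimal illustration under stated assumptions, not the translated code. The orthographic projection, the viewer position `EYE`, convex projected polygons, Cyrus–Beck parametric clipping for the segment test, and every function name (`distanceToPlane`, `projectCCW`, `hiddenRange`, `subtract`, `hiddenLine`) are hypothetical choices made for this example. `distanceToPlane` only stands in for the original DISTANCE_TO_PLANE key, whose exact definition is not given here.

```javascript
// Minimal sketch of object-space, front-to-back, polygon-clipping hidden-line
// removal. Assumptions (not from the 1989 source): orthographic projection onto
// the xy plane, a hypothetical viewer at EYE looking along +z, planar polygons
// whose projections are convex, and Cyrus–Beck clipping for the edge tests.
const EYE = { x: 0, y: 0, z: -10 };

// Distance from the eye to a polygon's plane: a hypothetical stand-in for the
// original DISTANCE_TO_PLANE sort key. The first three vertices define the
// plane normal, so they must not be collinear.
function distanceToPlane(eye, poly) {
  const [p0, p1, p2] = poly;
  const u = { x: p1.x - p0.x, y: p1.y - p0.y, z: p1.z - p0.z };
  const w = { x: p2.x - p0.x, y: p2.y - p0.y, z: p2.z - p0.z };
  const n = { x: u.y * w.z - u.z * w.y, y: u.z * w.x - u.x * w.z, z: u.x * w.y - u.y * w.x };
  const dot = n.x * (eye.x - p0.x) + n.y * (eye.y - p0.y) + n.z * (eye.z - p0.z);
  return Math.abs(dot) / Math.hypot(n.x, n.y, n.z);
}

// Project by dropping z, reversing the vertex order if needed so the 2D
// polygon is counter-clockwise. Also returns twice its unsigned area.
function projectCCW(poly) {
  const pts = poly.map((p) => ({ x: p.x, y: p.y }));
  let area2 = 0;
  for (let i = 0; i < pts.length; i++) {
    const a = pts[i], b = pts[(i + 1) % pts.length];
    area2 += a.x * b.y - b.x * a.y;
  }
  return { pts: area2 < 0 ? pts.reverse() : pts, area2: Math.abs(area2) };
}

// Cyrus–Beck: the parameter range [tIn, tOut] of segment a->b that lies inside
// (or on the boundary of) the convex CCW polygon pts, or null if none does.
function hiddenRange(a, b, pts) {
  const d = { x: b.x - a.x, y: b.y - a.y };
  let tIn = 0, tOut = 1;
  for (let i = 0; i < pts.length; i++) {
    const v = pts[i], next = pts[(i + 1) % pts.length];
    const n = { x: -(next.y - v.y), y: next.x - v.x }; // inward normal (CCW)
    const num = n.x * (a.x - v.x) + n.y * (a.y - v.y);
    const den = n.x * d.x + n.y * d.y;
    if (Math.abs(den) < 1e-12) {
      if (num < 0) return null; // parallel to this edge and outside it
    } else if (den > 0) {
      tIn = Math.max(tIn, -num / den); // segment is entering the polygon
    } else {
      tOut = Math.min(tOut, -num / den); // segment is leaving the polygon
    }
  }
  return tIn < tOut ? [tIn, tOut] : null;
}

// Remove a hidden range [h0, h1] from a list of visible [s, e] intervals.
function subtract(intervals, [h0, h1]) {
  const out = [];
  for (const [s, e] of intervals) {
    if (h1 <= s || h0 >= e) out.push([s, e]); // no overlap: keep whole
    else {
      if (s < h0) out.push([s, h0]);
      if (h1 < e) out.push([h1, e]);
    }
  }
  return out;
}

// Process polygons nearest-first. If the sort order is valid, every polygon
// already processed is in front, so any part of a later edge inside its
// projected outline is hidden. Returns visible pieces as pairs of 2D points.
function hiddenLine(polys, eye = EYE) {
  const order = [...polys].sort((p, q) => distanceToPlane(eye, p) - distanceToPlane(eye, q));
  const lerp = (a, b, t) => ({ x: a.x + t * (b.x - a.x), y: a.y + t * (b.y - a.y) });
  const occluders = [];
  const visible = [];
  for (const poly of order) {
    const { pts, area2 } = projectCCW(poly);
    for (let i = 0; i < pts.length; i++) {
      const a = pts[i], b = pts[(i + 1) % pts.length];
      let spans = [[0, 1]];
      for (const occ of occluders) {
        const h = hiddenRange(a, b, occ);
        if (h) spans = subtract(spans, h);
      }
      for (const [s, e] of spans) visible.push([lerp(a, b, s), lerp(a, b, e)]);
    }
    if (area2 > 1e-12) occluders.push(pts); // edge-on polygons hide nothing
  }
  return visible;
}

// Usage: a near square that partly covers a farther one.
const nearSquare = [{ x: 0, y: 0, z: 1 }, { x: 2, y: 0, z: 1 }, { x: 2, y: 2, z: 1 }, { x: 0, y: 2, z: 1 }];
const farSquare = [{ x: 1, y: 1, z: 5 }, { x: 3, y: 1, z: 5 }, { x: 3, y: 3, z: 5 }, { x: 1, y: 3, z: 5 }];
console.log(hiddenLine([farSquare, nearSquare]).length); // 8
```

Because polygons are handled nearest-first, an edge needs no depth comparison of its own: whatever falls inside a nearer polygon's projected outline is simply not drawn. A plain depth sort like this one can still misorder cyclically overlapping or interpenetrating polygons, and the sketch makes no attempt to handle those cases.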

Three and a half decades later, that same code is still doing exactly what it was designed to do — now translated to a language and a platform its author could not have imagined in 1989, with an AI partner that did not exist when the original was written.